Technical SEO refers to optimizing a website’s technical aspects so that search engines can crawl and index it efficiently. It encompasses strategies and practices focused on improving the website’s infrastructure.

Any website should cover at least the basics of technical SEO, such as XML sitemaps, robots.txt, URL structure, and schema markup (structured data). Both e-commerce giants have covered the basics, but one (Daraz) does it better than the other (Sastodeal).

Here’s the technical SEO analysis of Daraz and Sastodeal.

Website Structure

Daraz clearly accounted for technical SEO during site development. Its content types, such as the product detail page (PDP), category listing page (CLP), product listing page (PLP), brand page, and store page, are well organized and interconnected.

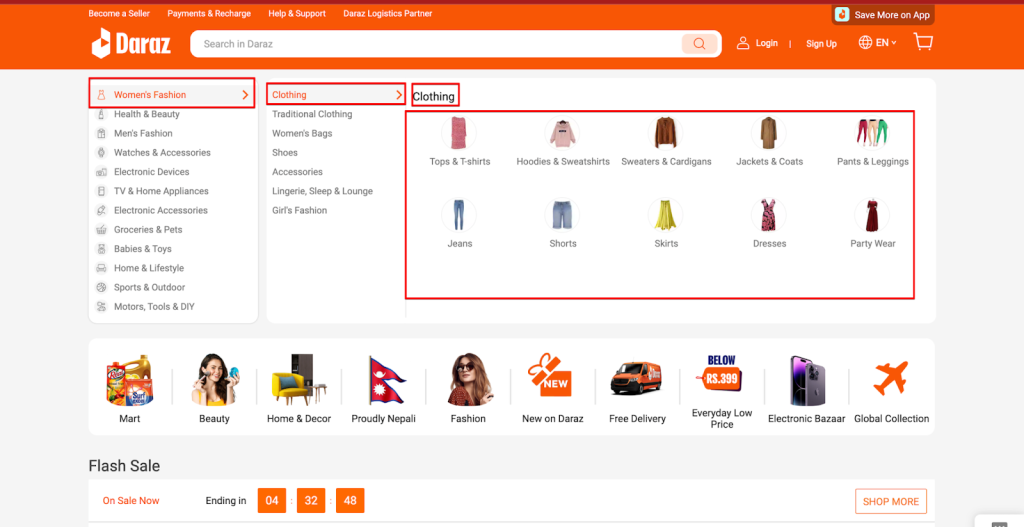

As customers of eCommerce platforms, what matters most to us is the user journey. Users can feel this while navigating the product categories, for example: Women’s Fashion -> Clothing -> Jeans, Shirts & More.

- Women’s Fashion: Broad category, which is simply plain text

- Clothing: Category Listing Page (CLP), which includes all sub-categories that fall under the broad category of clothing.

- Jeans, Shirts & More: Product Listing Page (PLP), which includes specific products that fall under the sub-categories

Daraz has a clear and structured navigation system, with categories like Women’s Fashion, Health & Beauty, Men’s Fashion, etc. This helps users and search engines understand and navigate the products easily.

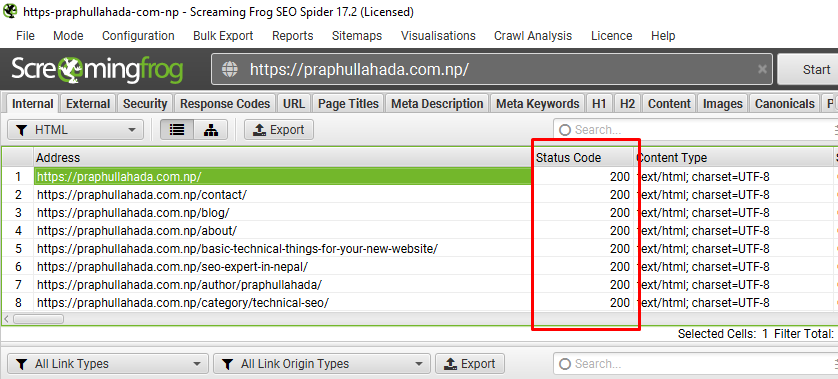

URL Structure

A site’s URL structure needs to be simple yet still convey meaning, ideally by including the primary keyword or at least a short variation of it. Daraz’s URL structure does exactly that. This is how Daraz has structured URLs for different content types:

Product Details Page -> https://www.daraz.com.np/products/product-name/

Category Listing Page -> https://www.daraz.com.np/category-listing-name/

Product Listing Page -> https://www.daraz.com.np/product-listing-name/

Brand Page -> https://www.daraz.com.np/product-listing-name/brand-name/

Store Page -> https://www.daraz.com.np/shop/shop-name/

Notice how Daraz constructs its URLs simply and logically, so they are intelligible to humans (readable words rather than long ID numbers).

On the other hand, Sastodeal’s URL structuring is quite poor. Some examples of poorly constructed URLs are:

https://www.sastodeal.com/default/sd-fast/food-essentials/rice-rice-products.html

https://www.sastodeal.com/default/sd-fast/food-essentials/rice-rice-products/sd-336943-423-salesberry-5304.html

But why are the above Sastodeal URLs poor?

- First and most important, the folder structure is too deep. This can have a negative impact on crawlability and indexability.

- Second, the URLs are long and hard to read, and they don’t contain a descriptive keyword the way Daraz’s do, which can negatively impact how Google understands the page.
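To make the folder-depth point concrete, here is a minimal sketch that counts path segments in the example URLs quoted above. The helper name `path_depth` is hypothetical, and counting segments is only a rough proxy for how deep a page sits in the site.

```python
from urllib.parse import urlparse


def path_depth(url: str) -> int:
    """Count the non-empty path segments (folders plus the final slug)."""
    path = urlparse(url).path
    return len([segment for segment in path.split("/") if segment])


# URLs copied from the examples in this post.
urls = [
    "https://www.daraz.com.np/products/product-name/",
    "https://www.sastodeal.com/default/sd-fast/food-essentials/rice-rice-products.html",
    "https://www.sastodeal.com/default/sd-fast/food-essentials/rice-rice-products/sd-336943-423-salesberry-5304.html",
]

for url in urls:
    print(path_depth(url), url)
```

The Daraz product URL has two segments, while the Sastodeal category and product URLs have four and five.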

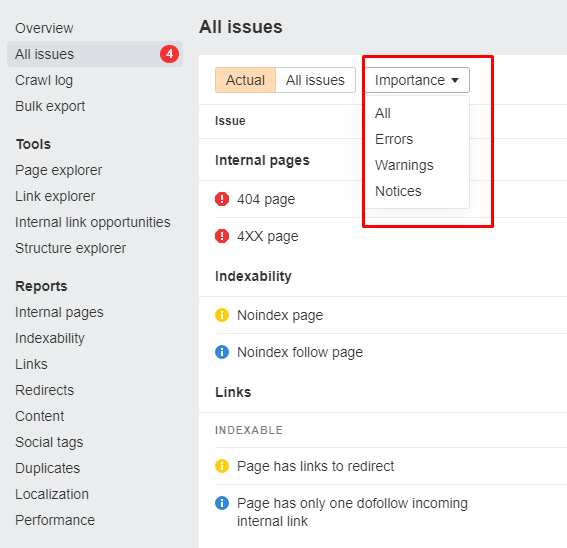

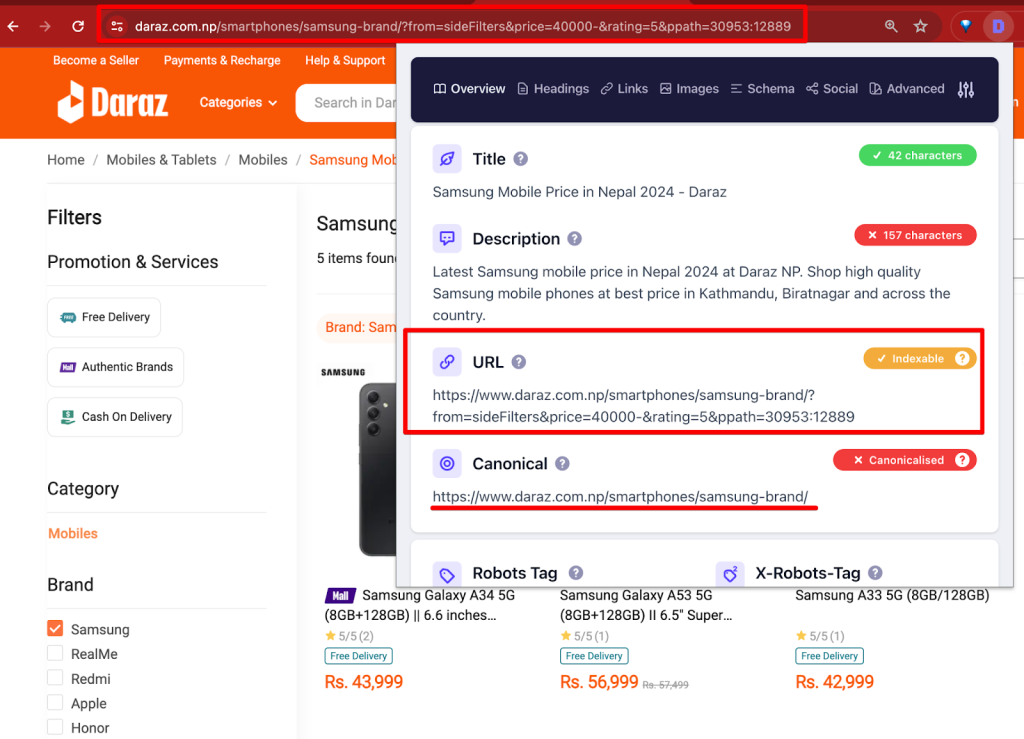

Canonicalization & Duplicate content

A product category can have multiple facets or filters that can cause duplicate pages. Likewise, a single product can have multiple variations like color, size and more which can cause massive duplicate page issues.

For instance, a t-shirt product page with 3 color variations and 4 size options can produce 12 (3 × 4) separate pages. If these pages aren’t handled with canonicalization, the resulting duplicates may hurt SEO visibility and rankings.

So what to do in such cases? To help Google understand which variant is best to show in Search, we should choose one of the product variant URLs as the canonical (main) URL for the product.

This is where the canonical tag (aka “rel canonical”) comes into play. It is a way of telling search engines that a specific URL represents the master copy of a page. Using the canonical tag helps to prevent problems caused by identical or “duplicate” content appearing on multiple URLs. Essentially, it tells search engines which version of a URL you want to appear in search results.
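The t-shirt example can be sketched in a few lines. This is an illustration only: the domain, the `color` and `size` query parameters, and the choice of the parameter-free URL as the canonical are all assumptions, not how Daraz actually builds its variant URLs.

```python
from itertools import product
from urllib.parse import urlencode, urlsplit, urlunsplit

# Hypothetical product URL and variant options.
BASE_URL = "https://www.example.com/products/plain-t-shirt/"
COLORS = ["red", "blue", "black"]
SIZES = ["s", "m", "l", "xl"]


def variant_urls() -> list[str]:
    """Build one URL per color/size combination (3 x 4 = 12)."""
    return [
        f"{BASE_URL}?{urlencode({'color': color, 'size': size})}"
        for color, size in product(COLORS, SIZES)
    ]


def canonical_url(url: str) -> str:
    """Drop the query string and fragment so every variant maps to one URL."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


def canonical_tag(url: str) -> str:
    """Render the <link> element to place in the variant page's <head>."""
    return f'<link rel="canonical" href="{canonical_url(url)}" />'


for url in variant_urls():
    print(url, "->", canonical_tag(url))
```

All 12 variant pages end up pointing at the same canonical URL, which is the signal search engines use to consolidate them.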

Daraz handles duplicate content issues well, making smart use of canonicalization.

Quick Note: It’s important to note that the canonical tag is generally a hint, not an absolute directive, to search engines. While search engines often respect canonical tags, they may ignore them in certain cases if they think a different page is more appropriate.

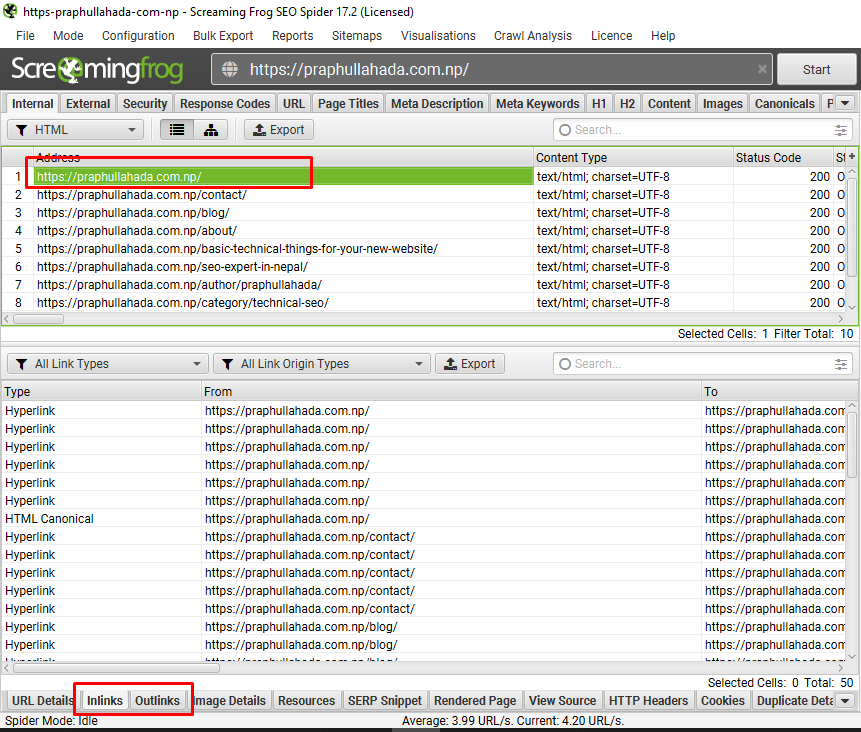

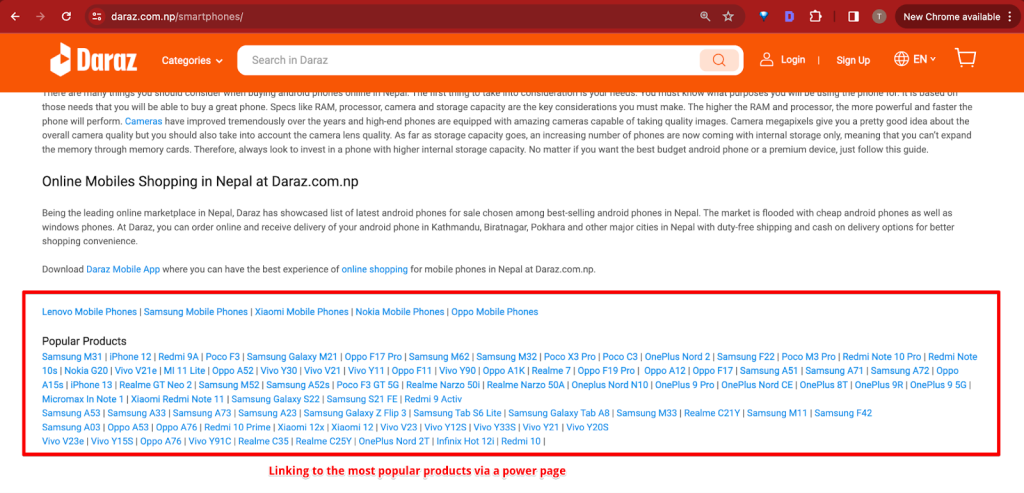

Internal linking

Search engines like Google use links as one of the strongest signals when determining the relevance of pages. Internal links also help search engine bots find new pages.

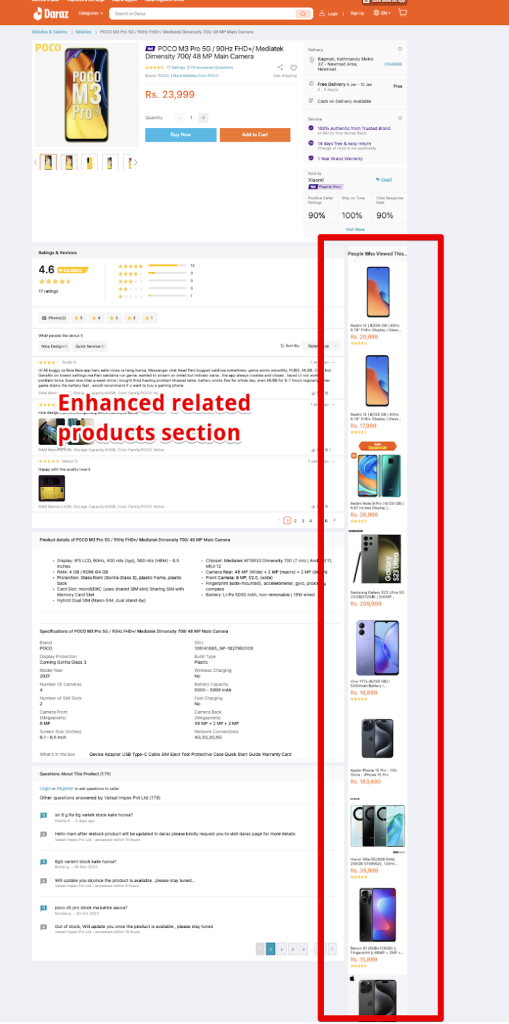

The “Related Products” section on an eCommerce website plays a crucial role in SEO. Why? For three reasons.

First, it enhances user engagement by keeping visitors on the site longer as they browse through additional items.

Second, it creates an opportunity for internal linking, which is vital for SEO. By linking related products, the website can spread link equity and help search engines better understand the site’s structure and content relevance.

Third, it aids in the discovery of more pages by search engine crawlers, increasing the likelihood of additional pages being indexed.
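The discovery point can be illustrated with a small breadth-first walk over an internal-link graph. The graph below is entirely hypothetical; the sketch only shows that a page becomes reachable (and at what click depth) once some other page links to it.

```python
from collections import deque

# Hypothetical internal-link graph: page -> pages it links to.
LINKS = {
    "/": ["/womens-fashion/", "/mens-fashion/"],
    "/womens-fashion/": ["/products/jeans-a/"],
    "/mens-fashion/": [],
    "/products/jeans-a/": ["/products/jeans-b/"],  # "Related Products" link
    "/products/jeans-b/": [],
    "/products/orphan/": [],  # no page links here
}


def click_depths(start: str, links: dict[str, list[str]]) -> dict[str, int]:
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = click_depths("/", LINKS)
unreachable = sorted(set(LINKS) - set(depths))
print(depths)
print("Not reachable through internal links:", unreachable)
```

In this toy graph, `/products/jeans-b/` is found only through the related-products link, while the orphan page is never reached at all.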

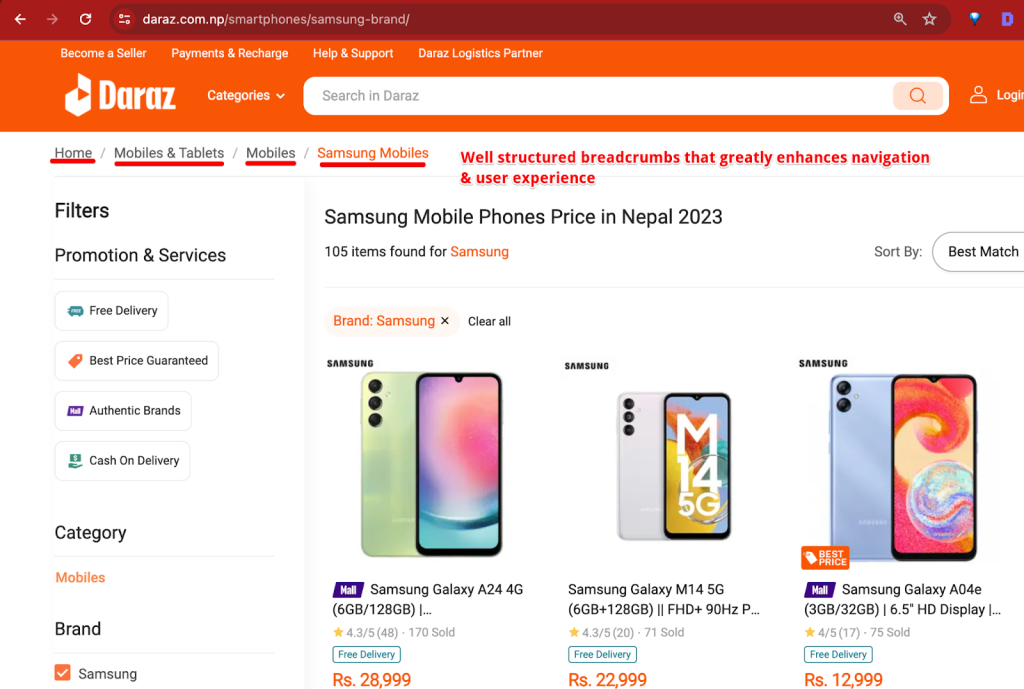

Breadcrumbs

Breadcrumbs are a navigational feature that enhances user experience and site structure, helping visitors understand a website’s hierarchy. Proper breadcrumbs also help search engine bots crawl and understand the site’s architecture more efficiently.
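Breadcrumbs are often exposed to search engines as schema.org `BreadcrumbList` markup. The sketch below builds that JSON-LD for the Women’s Fashion -> Clothing -> Jeans trail used earlier. The URLs are placeholders and the function name is hypothetical; it is not taken from either site.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build schema.org BreadcrumbList JSON-LD from (name, url) pairs."""
    items = [
        {"@type": "ListItem", "position": position, "name": name, "item": url}
        for position, (name, url) in enumerate(trail, start=1)
    ]
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(data, indent=2)


# Placeholder URLs, for illustration only.
trail = [
    ("Women's Fashion", "https://www.example.com/womens-fashion/"),
    ("Clothing", "https://www.example.com/womens-clothing/"),
    ("Jeans", "https://www.example.com/womens-jeans/"),
]
print(breadcrumb_jsonld(trail))
```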

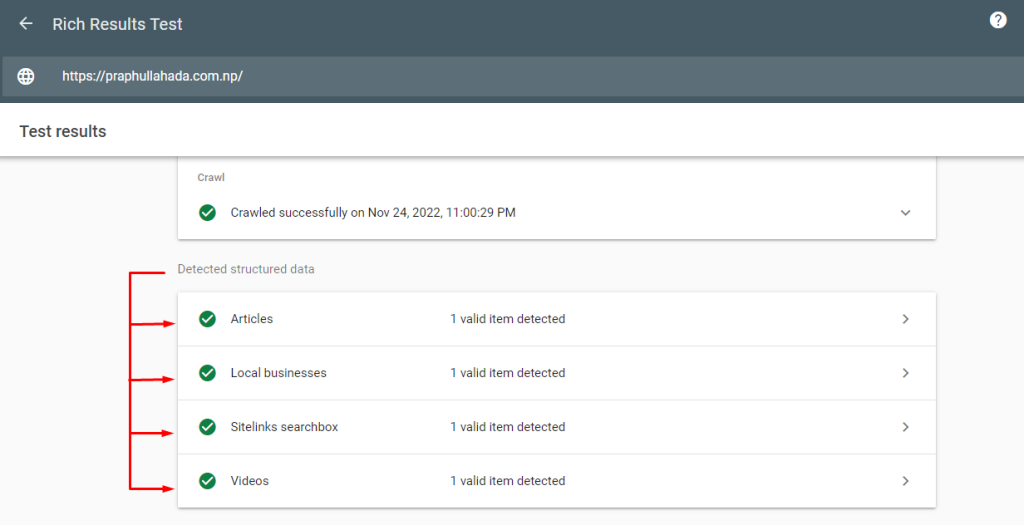

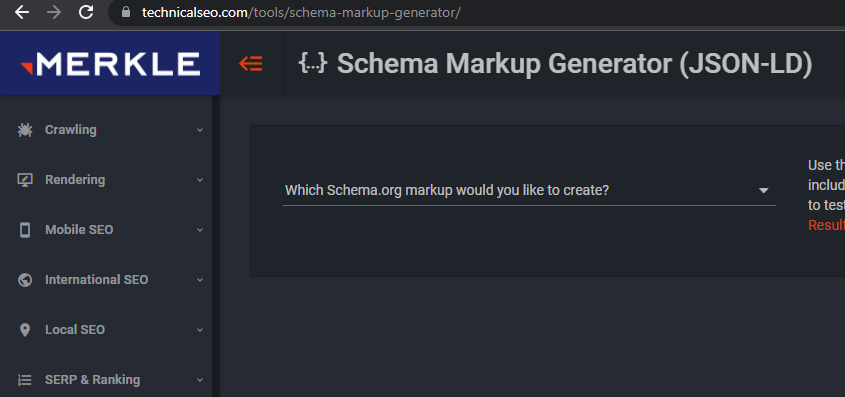

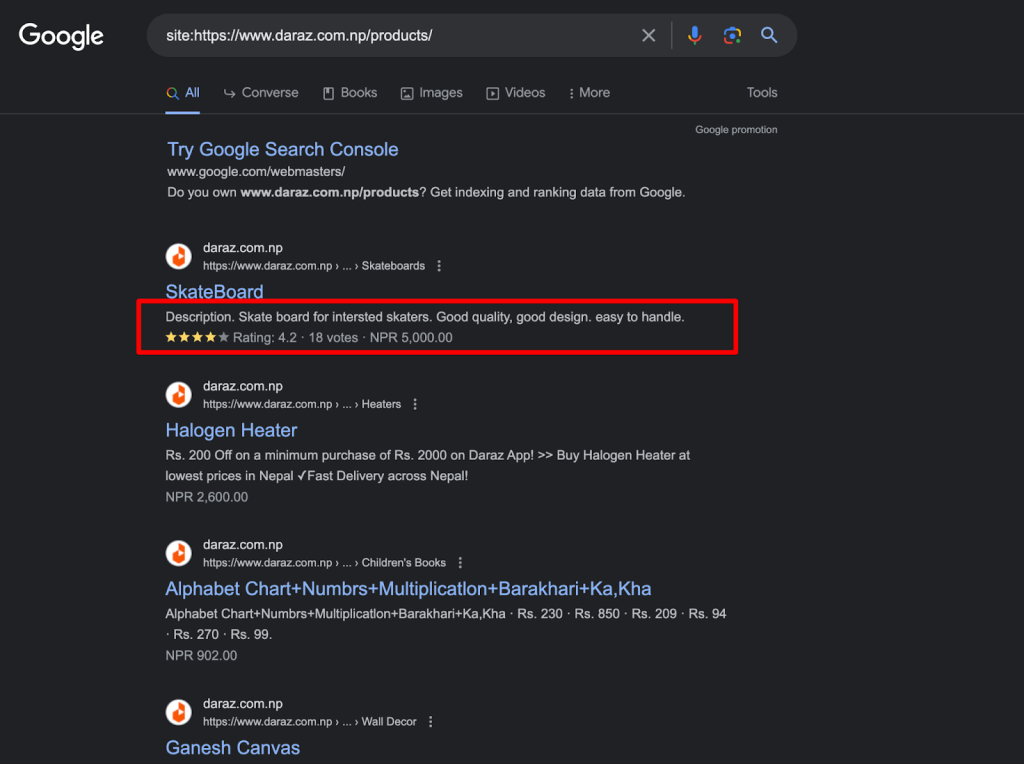

Structured Data

Structured data (schema markup) is code that helps search engines understand a page better. It gives search engines a shared vocabulary for connecting the dots between entities and displaying the corresponding rich results.
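As a rough idea of what such markup looks like on a product page, here is a minimal sketch that renders schema.org `Product` JSON-LD inside a script tag. The product name, SKU, price, and URL are made-up values, and real pages usually carry more properties (images, ratings, brand).

```python
import json


def product_jsonld(name: str, sku: str, price: str, currency: str, url: str) -> str:
    """Render schema.org Product markup as a JSON-LD <script> element."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical product details.
snippet = product_jsonld(
    name="Plain Cotton T-Shirt",
    sku="TSHIRT-001",
    price="999.00",
    currency="NPR",
    url="https://www.example.com/products/plain-cotton-t-shirt/",
)
print(snippet)
```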

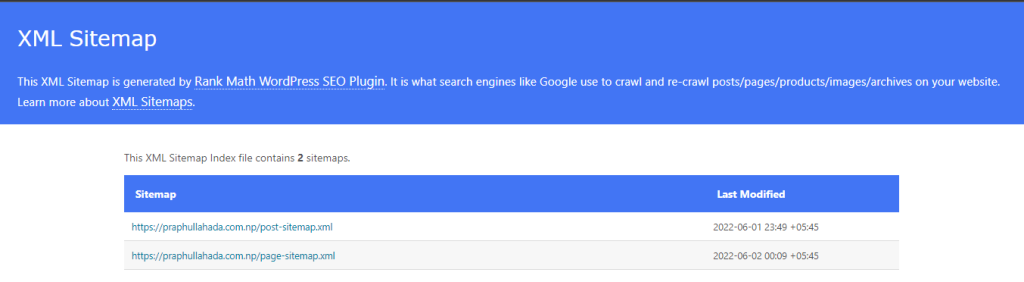

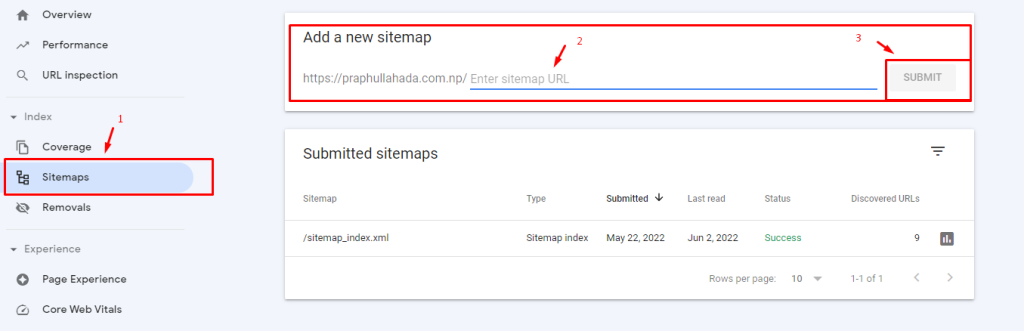

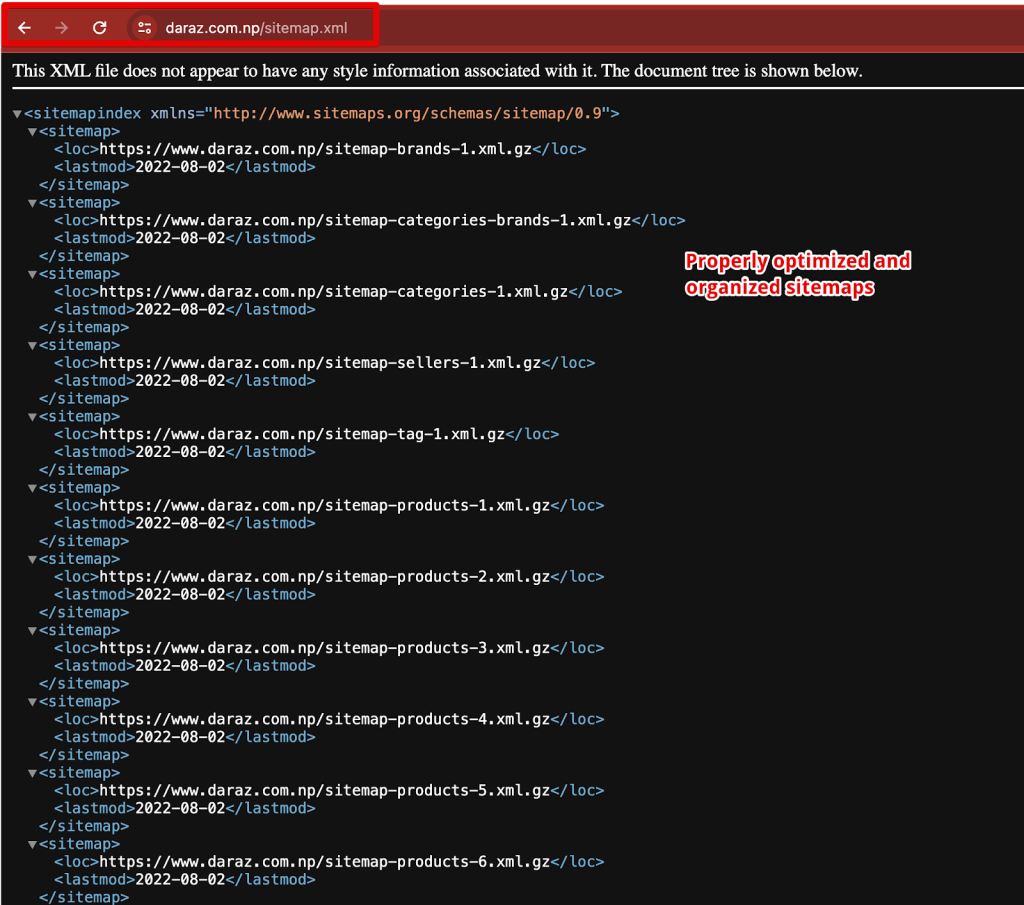

XML Sitemaps

Daraz’s sitemap is well optimized for search engine bots for several reasons, including its structured format and Gzip compression. It is also neatly split by content type, such as products, categories, brands, and more.
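A setup like this, with one gzipped sitemap per content type listed in a sitemap index, could be generated roughly as follows. The file names and URLs are assumptions for illustration and do not reflect Daraz’s actual sitemap files.

```python
import gzip
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap_gz(page_urls: list[str]) -> bytes:
    """Build a <urlset> sitemap and return it Gzip-compressed."""
    root = ET.Element("urlset", xmlns=NS)
    for url in page_urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    xml_bytes = ET.tostring(root, encoding="utf-8", xml_declaration=True)
    return gzip.compress(xml_bytes)


def build_sitemap_index(sitemap_urls: list[str]) -> bytes:
    """Build a <sitemapindex> that points at the per-type sitemaps."""
    root = ET.Element("sitemapindex", xmlns=NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(root, "sitemap"), "loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


# Hypothetical per-content-type sitemap files.
index_xml = build_sitemap_index([
    "https://www.example.com/sitemap-products.xml.gz",
    "https://www.example.com/sitemap-categories.xml.gz",
    "https://www.example.com/sitemap-brands.xml.gz",
])
products_gz = build_sitemap_gz(["https://www.example.com/products/product-name/"])
print(index_xml.decode("utf-8"))
```

Splitting by content type also makes it easier to see which kind of page has indexing problems when reviewing sitemap reports.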

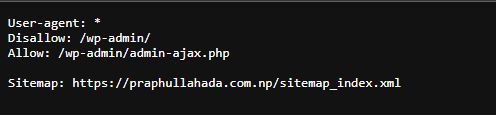

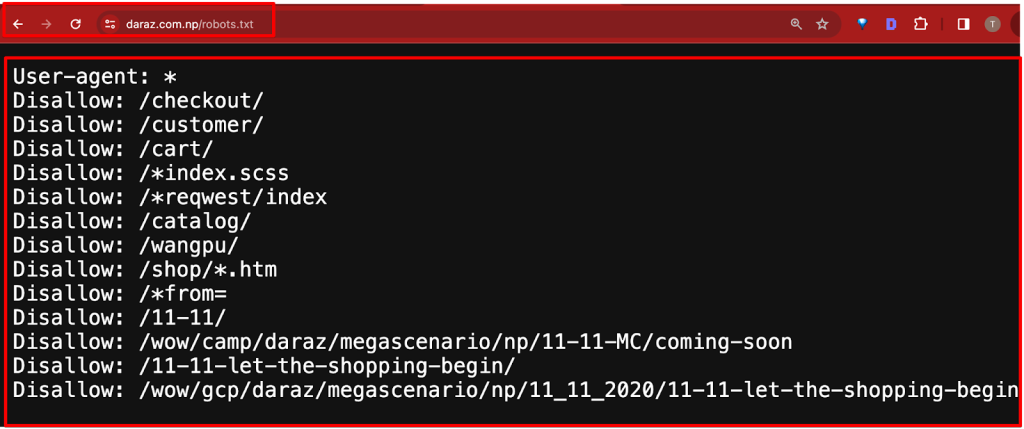

robots.txt

Daraz’s robots.txt file is well optimized for search engine bots. It strategically disallows sections of the site that are either not meant for public consumption (like customer profiles, carts, and checkout pages) or are less relevant (like temporary event pages or specific technical directories). This approach directs search engine bots to crawl the more important and relevant parts of the site, conserving crawl budget and supporting the site’s overall SEO performance.
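Rules of this kind can be checked with Python’s standard `urllib.robotparser`. The robots.txt content below is a simplified, hypothetical example in the spirit of what is described above, not a copy of Daraz’s file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep private, low-value sections out of the crawl.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /checkout/
Disallow: /customer/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules above allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


for url in [
    "https://www.example.com/products/product-name/",
    "https://www.example.com/cart/",
    "https://www.example.com/checkout/payment/",
]:
    print(is_crawlable(url), url)
```

Keep in mind that robots.txt controls crawling rather than indexing: a disallowed URL can still appear in search results if other pages link to it.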

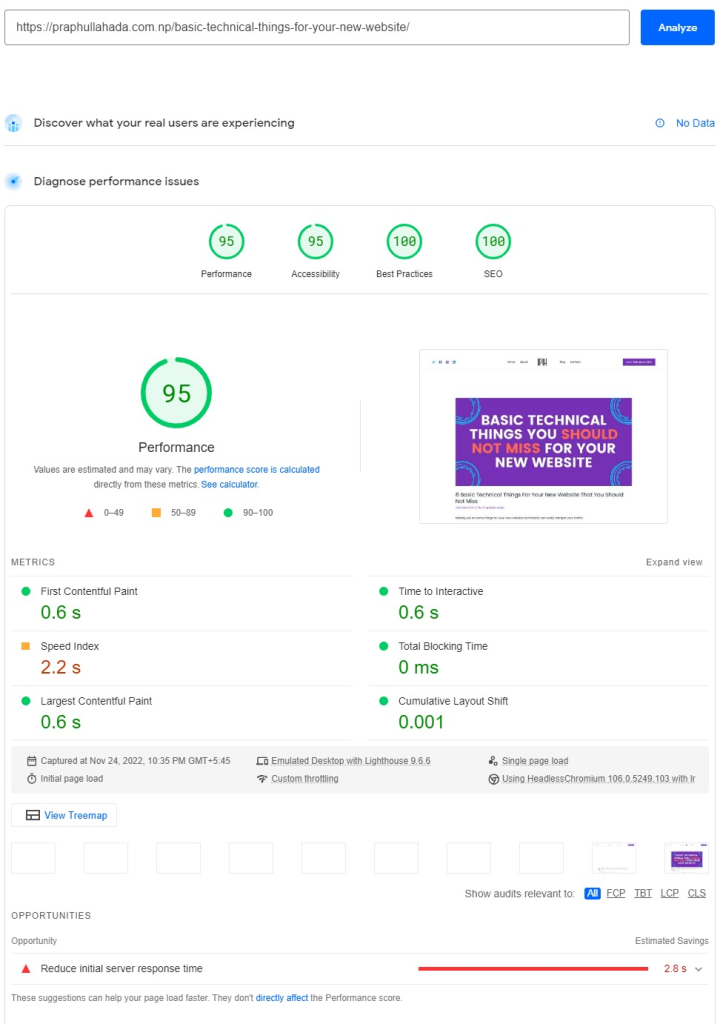

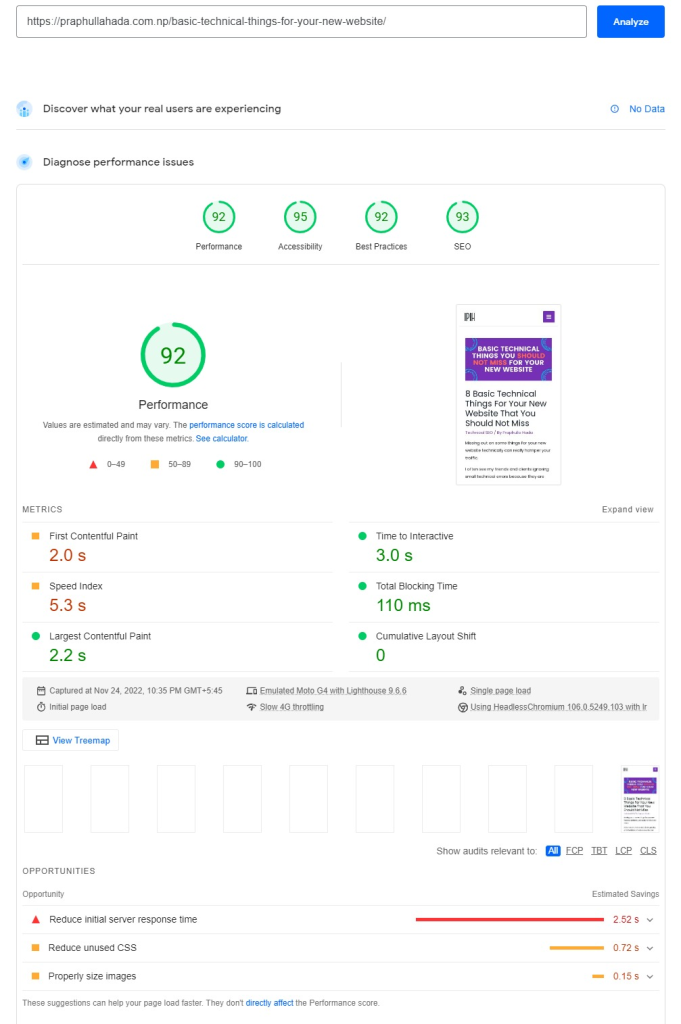

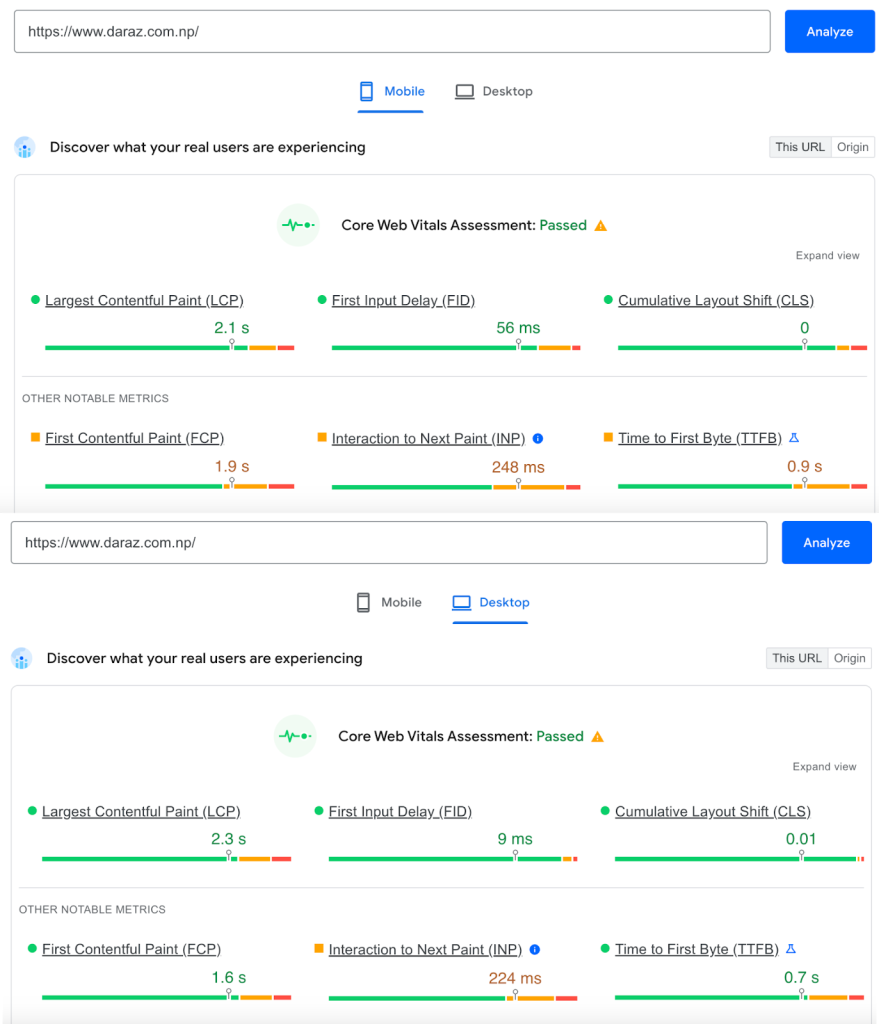

Core Web Vitals & Page Experience

Google’s Core Web Vitals are a set of metrics that evaluate the user experience of a web page, focusing on load time, interactivity, and visual stability. These include:

Largest Contentful Paint (LCP), which measures the time taken to render the largest content element visible in the viewport, from when the user requests the URL.

First Input Delay (FID), which measures the time from when a user first interacts with your page (when they click a link, tap a button, and so on) to the time when the browser responds to that interaction.

Cumulative Layout Shift (CLS), which measures the sum of all individual layout shift scores for every unexpected layout shift that occurs during the entire lifespan of the page.

The latest addition is Interaction to Next Paint (INP), which replaced FID as a Core Web Vital in March 2024. It assesses a page’s overall responsiveness by observing how long the page takes to respond to all click, tap, and keyboard interactions that occur throughout a user’s visit.

Google’s ranking systems take these metrics into account, as they aim to ensure a fast, responsive, and stable user experience.
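Each metric is typically rated as good, needs improvement, or poor. The sketch below applies Google’s published thresholds to a set of sample values. The sample numbers are invented for illustration and are not measurements of Daraz or Sastodeal.

```python
# Published thresholds: (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "FID": (100, 300),   # milliseconds (replaced by INP)
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate(metric: str, value: float) -> str:
    """Classify a metric value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Invented sample values, for illustration only.
sample = {"LCP": 2.1, "INP": 350, "CLS": 0.3}
for metric, value in sample.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```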

A review of the Core Web Vitals and page experience of Daraz shows that:

- Pages have good Core Web Vitals

- Pages served in a secure fashion

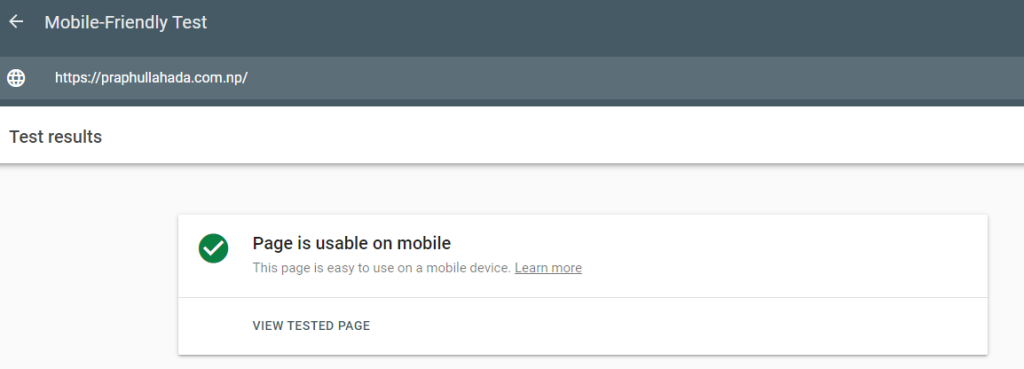

- Page content displays well for mobile devices when viewed on them

- Pages lack intrusive interstitials

- Visitors can easily navigate to or locate the main page content

TL;DR

While Sastodeal shows promising potential in e-commerce, adopting a more aggressive and refined technical SEO approach similar to Daraz’s can significantly boost its organic traffic. By learning from Daraz’s successes and addressing these areas of improvement, Sastodeal has the potential to thrive again in Nepal’s e-commerce marketplace.

This post is a snippet of the Technical SEO aspects from the full SEO comparison. Read the full SEO comparative case study here.
